
In the early 1980s, the Room Acoustics team at Ircam conducted extensive research on the characterization of the acoustical quality of performance venues such as auditoria, theaters or opera houses. The effect of room reverberation in these spaces can be described from several perspectives:

- From an architectural standpoint, referring to geometric and physical attributes of the room, such as its shape, size, and wall material.

- From an acoustician’s point of view, in terms of objective metrics (such as the reverberation time) calculated from an impulse response measured in the venue.

- Or from a perceptual viewpoint: expressing the audience’s sensations such as “intimacy” or “sweetness,” much like one would describe the timbre of a sound or the taste of a wine.

Focusing on this last, perceptual approach, the team, led by Jean-Pascal Jullien and Olivier Warusfel, identified a small set of subjective parameters that characterize the concert listening experience. Using these parameters (“the ingredients”), they developed a perceptual model (“the cooking recipe”) to control an artificial reverberator.

At the beginning of the 1990s, multichannel digital reverbs became feasible thanks to the Feedback Delay Network (FDN) architecture designed by Jean-Marc Jot, and the Max environment was developed. This led to the birth of the Spat project: a spatialized artificial reverberator that runs in real time within Max and is controlled by a perceptual interface. This high-level control interface is well suited to artistic productions, as it provides an intuitive way to configure the reverb engine and allows for seamless interpolation between different acoustic qualities. Remarkably, the Spat framework was also implemented using an object-based description of the room effect, decades before the development of the mainstream object-based formats in use today: direct sound and early reflections are assigned a precise position (and orientation) in space, while late reverb is encoded as a diffuse “bed”. This provides a universal spatial scene representation and ensures layout independence, i.e., the content is automatically adapted to any loudspeaker (or headphone) playback configuration.

Over the years and through successive versions of Spat, these unique characteristics have been preserved. The Spat library has also evolved to incorporate the major developments that have transformed spatial audio in recent decades.

The following paragraphs highlight some of the key features of the library.

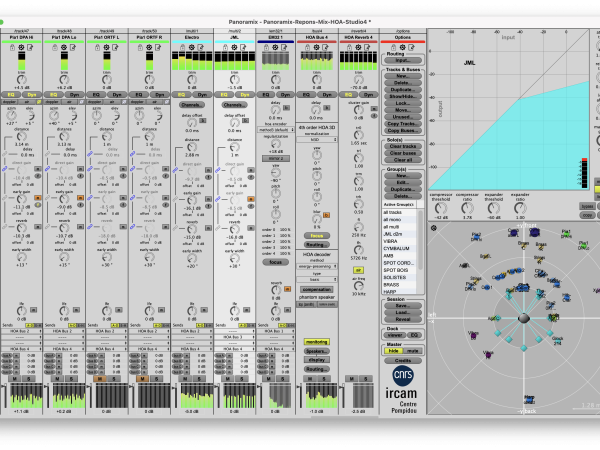

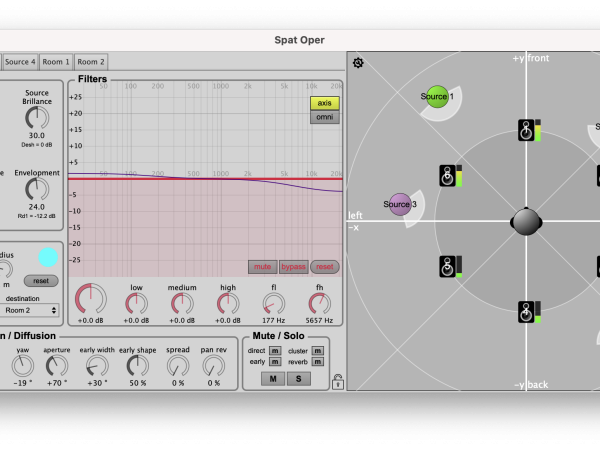

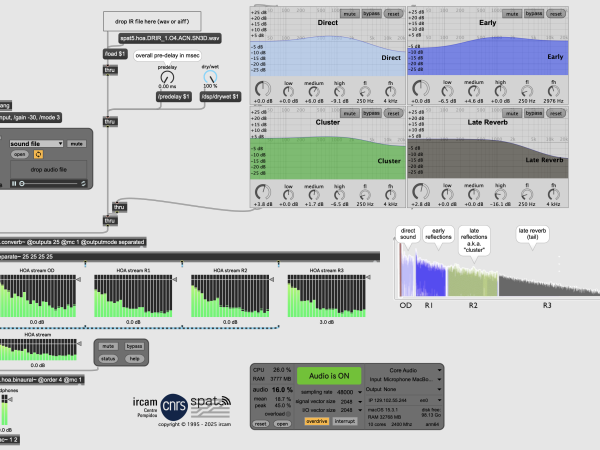

Spat5.oper implementing a perceptual model for the control of the (virtual) room acoustical quality.

Artificial reverberation

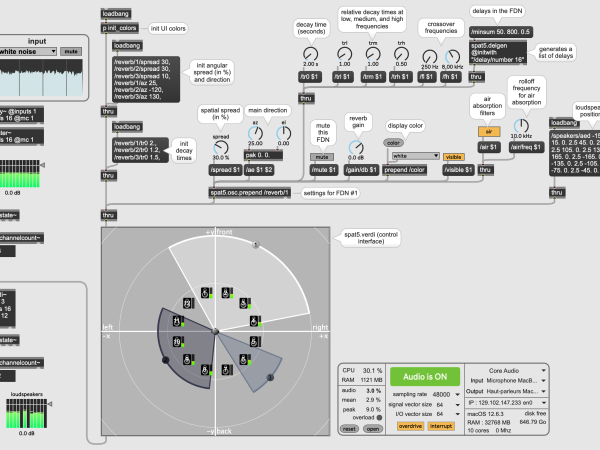

A key component of Spat is its room engine, powered by an FDN unit that produces natural-sounding reverb effects. This module is highly scalable: while it typically uses 8 to 16 internal feedback channels for optimal computational efficiency, this number can be increased to achieve extremely dense or long reverbs (optionally with infinite reverberation time). Additionally, the decay rate can be adjusted across multiple frequency bands. Although three bands (low/mid/high) are usually sufficient to emulate most conventional spaces, using a finer spectral resolution (up to 30 bands) unlocks more experimental sound design possibilities.
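To make the FDN principle concrete, here is a minimal sketch in Python. It is not Spat's implementation: the four delay lengths, the Hadamard feedback matrix, and the single broadband decay time are assumptions chosen for illustration. Each delay line of length d (in samples) is attenuated by 10^(-3d / (T60 · fs)), so that the whole network decays by 60 dB after T60 seconds.

```python
def fdn_impulse_response(delays, t60, fs, n_samples):
    """Impulse response of a 4-line FDN with a frequency-independent decay."""
    if len(delays) != 4:
        raise ValueError("this sketch is hard-wired to four delay lines")
    # Orthogonal (lossless) 4x4 Hadamard feedback matrix, scaled by 1/2.
    hadamard = [[1, 1, 1, 1],
                [1, -1, 1, -1],
                [1, 1, -1, -1],
                [1, -1, -1, 1]]
    feedback = [[0.5 * h for h in row] for row in hadamard]
    # Per-line attenuation giving a 60 dB decay after t60 seconds.
    gains = [10.0 ** (-3.0 * d / (t60 * fs)) for d in delays]
    buffers = [[0.0] * d for d in delays]  # circular delay lines
    index = [0] * 4
    out = []
    for n in range(n_samples):
        x = 1.0 if n == 0 else 0.0  # unit impulse at the input
        # Read the delayed, attenuated sample at the end of each line.
        taps = [gains[i] * buffers[i][index[i]] for i in range(4)]
        out.append(sum(taps))
        # Mix the taps through the feedback matrix, add the input, write back.
        for i in range(4):
            fb = sum(feedback[i][j] * taps[j] for j in range(4))
            buffers[i][index[i]] = x + fb
            index[i] = (index[i] + 1) % delays[i]
    return out
```

Calling `fdn_impulse_response([149, 211, 263, 293], t60=0.5, fs=8000, n_samples=8000)` returns one second of decaying response. A frequency-dependent decay, as described above, would replace each scalar gain with a small filter per delay line.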

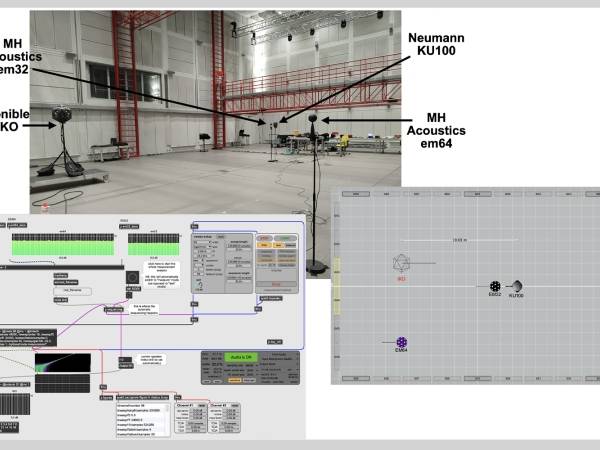

In addition to algorithmic reverberation, convolution-based reverberation is also supported. For instance, the spat5.converb~ object is a convolution engine implementing efficient, zero-latency FFT algorithms. It simulates the reverberation of a space based on its multichannel impulse response (IR). The object also offers some tuning and creative options: the IR can be split into multiple temporal segments (direct sound/early reflections/late reverb), and spectral or spatial effects can be applied to each segment, enabling the design of a completely new IR. The Spat package also includes a utility tool (spat5.smk~) that uses the swept-sine technique to capture IRs of reverberant spaces.
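The idea of splitting an IR into temporal segments and reshaping each one can be sketched as follows. This is an illustrative Python sketch, not the spat5.converb~ algorithm: the function names, the 5 ms and 80 ms segment boundaries, and the use of a plain gain per segment are assumptions, and the convolution is a naive time-domain loop rather than a zero-latency FFT scheme.

```python
def split_ir(ir, fs, early_start_ms, late_start_ms):
    """Split an IR into direct / early / late segments, zero-padded to full length."""
    a = int(round(early_start_ms * 1e-3 * fs))  # first sample of the early part
    b = int(round(late_start_ms * 1e-3 * fs))   # first sample of the late part
    direct = [v if i < a else 0.0 for i, v in enumerate(ir)]
    early = [v if a <= i < b else 0.0 for i, v in enumerate(ir)]
    late = [v if i >= b else 0.0 for i, v in enumerate(ir)]
    return direct, early, late

def redesign_ir(ir, fs, gains, early_start_ms=5.0, late_start_ms=80.0):
    """Rebuild an IR after applying one gain per segment (direct, early, late)."""
    segments = split_ir(ir, fs, early_start_ms, late_start_ms)
    return [sum(g * seg[i] for g, seg in zip(gains, segments))
            for i in range(len(ir))]

def convolve(x, h):
    """Direct-form convolution of a dry signal x with an impulse response h."""
    y = [0.0] * (len(x) + len(h) - 1)
    for i, xv in enumerate(x):
        for j, hv in enumerate(h):
            y[i + j] += xv * hv
    return y
```

For example, `redesign_ir(ir, 48000, gains=(1.0, 0.0, 2.0))` would mute the early reflections and double the late reverb before the result is passed to `convolve`. Because convolution is linear, processing the segments separately and summing them is equivalent to convolving with the redesigned IR.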

Example of an IR measurement session with spat5.smk~, in Ircam’s Espace de Projections

Spat5.converb~ convolving an Ambisonic IR, with parametric filters applied to the direct/early/late parts of the IR.

Directional reverberation zones in spat5.verdi

Panning

There are a variety of panning algorithms, each with its own strengths and limitations. For example, VBAP is popular for its ability to produce “point-like” virtual sources, while Ambisonics is commonly used to deliver immersive ambiences. Clearly, there is no “one size fits all” spatialization technique. Spat therefore supports all state-of-the-art panning techniques and lets the user mix them depending on the nature of the sound material or the desired spatial effect. In addition to VBAP and Ambisonics, the toolbox also includes Wave-Field Synthesis (WFS). Any loudspeaker configuration, whether 2D or 3D, can potentially be used with WFS, although certain spatial effects, like focused sound sources (located inside the listening area), require sufficiently dense speaker arrays due to spatial aliasing constraints. The panning module (spat5.pan~) further implements binaural synthesis (with optional simulation of nearfield effects), conventional stereo techniques (AB, XY, MS), and distance-based panning approaches (such as DBAP and KNN).
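As an illustration of how a “point-like” panner works, the following Python sketch computes pairwise 2D VBAP gains. It is a simplified sketch under stated assumptions, not the spat5.pan~ code: the function name is hypothetical, azimuths are in degrees measured counterclockwise, and the loudspeakers are assumed to form a horizontal ring around the listener. The source direction is expressed as a non-negative combination of the two adjacent loudspeaker directions, and the gains are normalized to unit energy.

```python
import math

def _unit(az_deg):
    """Unit vector for an azimuth in degrees (counterclockwise, assumed convention)."""
    a = math.radians(az_deg)
    return math.cos(a), math.sin(a)

def vbap_2d(source_az_deg, speaker_az_deg):
    """Return one gain per loudspeaker; at most two adjacent speakers are active."""
    n = len(speaker_az_deg)
    src = _unit(source_az_deg)
    # Visit loudspeaker pairs in order of increasing azimuth around the ring.
    order = sorted(range(n), key=lambda i: speaker_az_deg[i] % 360.0)
    for k in range(n):
        i, j = order[k], order[(k + 1) % n]
        l1, l2 = _unit(speaker_az_deg[i]), _unit(speaker_az_deg[j])
        det = l1[0] * l2[1] - l1[1] * l2[0]
        if abs(det) < 1e-9:
            continue  # collinear pair, cannot span the plane
        # Solve src = g1 * l1 + g2 * l2 with Cramer's rule.
        g1 = (src[0] * l2[1] - src[1] * l2[0]) / det
        g2 = (l1[0] * src[1] - l1[1] * src[0]) / det
        if g1 >= -1e-9 and g2 >= -1e-9:
            g1, g2 = max(g1, 0.0), max(g2, 0.0)
            norm = math.hypot(g1, g2)  # unit-energy normalization
            gains = [0.0] * n
            gains[i], gains[j] = g1 / norm, g2 / norm
            return gains
    raise ValueError("source direction is not enclosed by any loudspeaker pair")
```

With a square layout at azimuths 45, 135, 225 and 315 degrees, a source at 90 degrees is shared equally between the first two loudspeakers, while a source at 45 degrees is sent to the first loudspeaker only.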

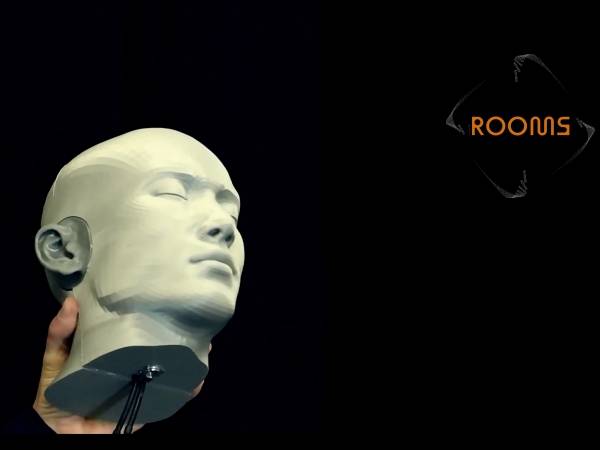

Arbitrary loudspeaker layouts can be monitored through headphones using spat5.virtualspeakers~. This tool employs head-related transfer functions (HRTFs) to convert any multichannel stream into a binaural stereo track, preserving the spatial characteristics of the original sound scene.

Ambisonic Workflow

Higher-Order Ambisonic (HOA) technologies have attracted significant interest from both academia and the media industry since the 2000s. State-of-the-art tools supporting the HOA production workflow have been integrated into the Spat library. These include in particular:

- A-to-B format encoders that transcode microphone signals into the Ambisonic domain. Popular first-order (Sennheiser Ambeo, Soundfield, Tetramic, etc.) and higher-order microphone arrays (e.g., MH Acoustics em32 and em64, or Zylia ZM1) are supported.

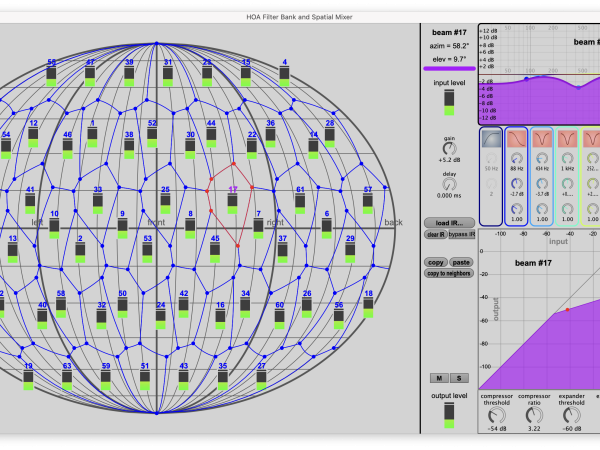

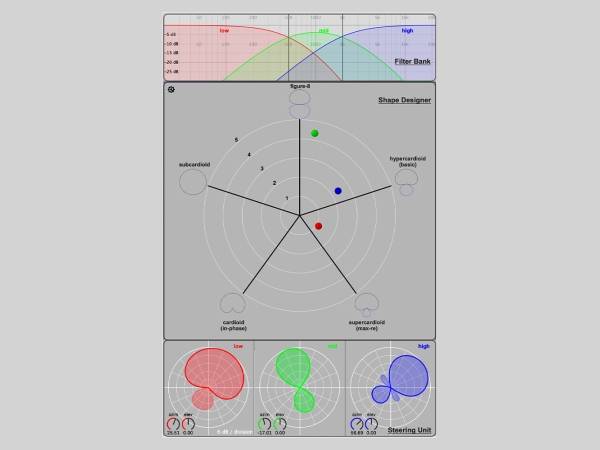

- An extensive collection of tools for applying spatial FX to Ambisonic streams, such as 3D rotations, mirroring, directional enhancement (or “focus”), warping (squeezing or stretching the scene towards a given direction), blurring, etc. Ambisonic beamforming (spat5.hoa.beam~) and spatial filter banks are also available, allowing localized sound transformations in selected areas of space. This collection of Max objects offers a wide range of creative manipulations, particularly useful when processing soundfield recordings.

- A variety of decoders that can cope with regular or irregular loudspeaker layouts, such as the mode-matching Ambisonic decoder (MMAD), the energy-preserving Ambisonic decoder (EPAD), All-Round Ambisonic Decoding (AllRAD), and more.

Ambisonic streams can also be monitored binaurally using the magnitude least-squares (MagLS) technique, which is designed to preserve timbre as faithfully as possible.
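To give a feel for what encoding and rotating an Ambisonic stream involve, here is a minimal first-order sketch in Python. The channel ordering (ACN: W, Y, Z, X), the SN3D normalization, and the counterclockwise azimuth convention are assumptions made for this example and do not necessarily match the conventions used inside Spat; the function names are hypothetical.

```python
import math

def encode_foa(sample, az_deg, el_deg):
    """Encode a mono sample into first-order Ambisonics [W, Y, Z, X] (ACN/SN3D assumed)."""
    az, el = math.radians(az_deg), math.radians(el_deg)
    w = sample
    y = sample * math.sin(az) * math.cos(el)
    z = sample * math.sin(el)
    x = sample * math.cos(az) * math.cos(el)
    return [w, y, z, x]

def rotate_yaw(frame, yaw_deg):
    """Rotate a first-order frame around the vertical axis by yaw_deg."""
    w, y, z, x = frame
    c, s = math.cos(math.radians(yaw_deg)), math.sin(math.radians(yaw_deg))
    # W and Z are invariant under a rotation about the vertical axis;
    # X and Y transform like the coordinates of a 2D vector.
    return [w, s * x + c * y, z, c * x - s * y]
```

Rotating a scene that contains a source at azimuth 30 degrees by a yaw of 45 degrees gives the same frame as encoding the source directly at 75 degrees. This is the property that makes whole-scene rotations cheap in the Ambisonic domain.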

Spatially localized sound effects applied to an Ambisonic stream using spat5.hoa.beamix~

Directivity designer to shape steerable beampatterns compatible with Ambisonic microphone or loudspeaker arrays.

Object-based audio

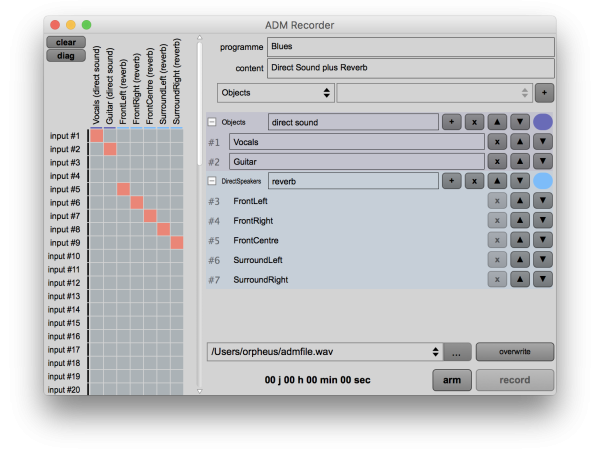

Over the past decade, there has been a surge of interest in the object-based approach for creating and broadcasting multichannel audio. Several interchange formats have been proposed, with the Audio Definition Model (ADM) being a notable open standard published by the ITU and EBU, and also serving as the foundation for Dolby Atmos master files. ADM is designed to describe object-oriented media encapsulated in a Broadcast Wave Format (BWF) container. ADM prescribes a set of metadata (such as time-varying position and gain of audio objects) encoded in the BWF file, in an XML chunk. The Spat toolbox offers a complete production chain for BWF-ADM files. For instance, spat5.adm.record~ enables the creation of BWF files with embedded spatialization metadata, and spat5.adm.renderer~ handles the real-time rendering/monitoring of ADM media across various reproduction setups (headphones or loudspeaker layouts). Other spat5 modules also enable basic interactivity with the object-based content.
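The kind of time-varying metadata that ADM stores for an audio object can be sketched with Python's standard XML library. This is a deliberately simplified fragment, not a complete or validated ADM document and not the output of spat5.adm.record~: only a single channel format with its position and gain blocks is built, and the helper names, the object name, and the ID values are placeholders chosen for illustration.

```python
import xml.etree.ElementTree as ET

def adm_time(seconds):
    """Format a time in seconds as hh:mm:ss.sssss."""
    h, rem = divmod(seconds, 3600)
    m, s = divmod(rem, 60)
    return f"{int(h):02d}:{int(m):02d}:{s:08.5f}"

def object_channel_format(name, keyframes, channel_id="AC_00031001"):
    """Build a simplified channel format for one object.

    keyframes: list of (start_s, duration_s, azimuth, elevation, distance, gain).
    """
    channel = ET.Element("audioChannelFormat", {
        "audioChannelFormatID": channel_id,
        "audioChannelFormatName": name,
        "typeDefinition": "Objects",
    })
    for n, (start, dur, az, el, dist, gain) in enumerate(keyframes, start=1):
        block = ET.SubElement(channel, "audioBlockFormat", {
            "audioBlockFormatID": f"AB_{channel_id[3:]}_{n:08x}",
            "rtime": adm_time(start),
            "duration": adm_time(dur),
        })
        # One <position> element per polar coordinate, then the block gain.
        for coord, value in (("azimuth", az), ("elevation", el), ("distance", dist)):
            ET.SubElement(block, "position", {"coordinate": coord}).text = f"{value:.1f}"
        ET.SubElement(block, "gain").text = f"{gain:.2f}"
    return channel

def to_xml(element):
    """Serialize an element to a unicode XML string."""
    return ET.tostring(element, encoding="unicode")
```

For instance, `to_xml(object_channel_format("Violin", [(0.0, 2.5, 30.0, 0.0, 1.0, 1.0), (2.5, 2.5, -30.0, 10.0, 1.0, 0.8)]))` describes an object that sits at 30 degrees for 2.5 seconds and then moves to -30 degrees with a slightly lower gain. In a real BWF-ADM file, such elements sit alongside many others (programme, content, object, pack and track descriptions) in the XML chunk of the file.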

Configuring an ADM session with two objects and a reverb bed in spat5.adm.record~

Creative tools for spatial authoring

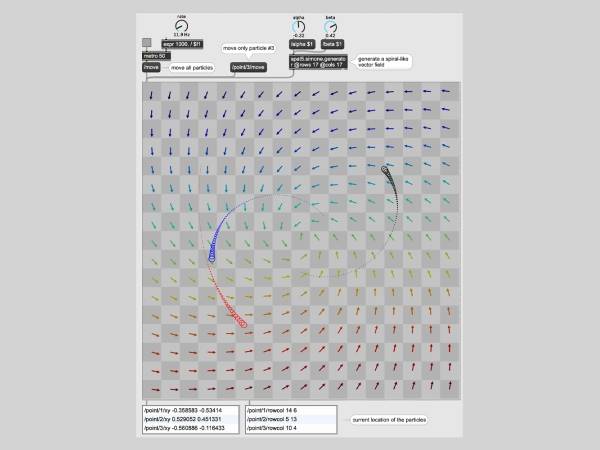

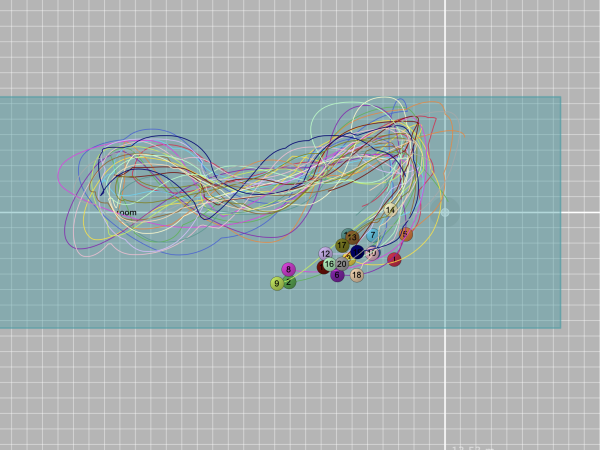

Spatial sound production most often involves the manipulation of geometric data, either for the configuration of the reproduction system or for more creative purposes. In particular, multichannel composition frequently uses the paradigm of spatial trajectories, in which the movements of sound sources are conceived as paths, curves, or parametric or random patterns in space. To this end, the Spat library comes with a collection of utility tools to generate, transform and manipulate geometric data: spat5.trajectories features 50+ parametric equations for 2D or 3D curves; spat5.boids implements a classic simulation of a flock of birds; spat5.simone computes the motion of “particles” inside a vector field, producing fluid and realistic movements of autonomous agents; the OSCar plugin exchanges coordinate data with a DAW automation system; etc.

Besides these control-rate objects, the package also includes a number of audio FX potentially useful in multichannel composition, such as a Leslie speaker simulator (with rotating horn and woofer) or a spatial granulator engine that distributes sound grains in space.
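The principle of moving a particle through a vector field can be sketched in a few lines of Python. This is an illustrative sketch only, not the algorithm used by spat5.simone: the rotational field, the midpoint (second-order Runge-Kutta) integrator, and the function names are assumptions chosen for the example.

```python
def rotational_field(x, y):
    """Example velocity field: counterclockwise rotation around the origin."""
    return -y, x

def advect(pos, field, dt, n_steps):
    """Move a particle through `field` using midpoint (RK2) integration."""
    x, y = pos
    path = [(x, y)]
    for _ in range(n_steps):
        # Evaluate the velocity at the current position...
        vx, vy = field(x, y)
        # ...take half a step, and re-evaluate the velocity at the midpoint.
        mx, my = x + 0.5 * dt * vx, y + 0.5 * dt * vy
        vx, vy = field(mx, my)
        # Advance a full step using the midpoint velocity.
        x, y = x + dt * vx, y + dt * vy
        path.append((x, y))
    return path
```

Calling `advect((1.0, 0.0), rotational_field, dt=0.001, n_steps=6283)` traces approximately one full circle of radius 1. Each point of the returned path could then be sent as the position of a sound source; swapping in a different field function changes the character of the motion without touching the integrator.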

Spat5.simone with three particles (red, blue, and black) getting animated by the underlying vector field.

Flock of autonomously moving sources in spat5.boids.

Key characteristics

- 300+ objects supporting the state-of-the-art techniques for panning (Ambisonics, WFS, binaural, etc.) and artificial reverberation,

- Modularity and versatility: from user-friendly GUIs (e.g., the spat5.panoramix workstation), which can be configured without any prior knowledge of the Max programming language, down to low-level modules that can be patched and customized freely,

- Highly optimized DSP engine, allowing the real-time rendering of complex scenes with a large number of elements,

- Fully controllable via the OSC protocol,

- Large set of utility tools for manipulating multichannel streams or files,

- Freely available via the Ircam Forum
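As a sketch of what OSC control looks like on the wire, the following Python code builds a binary OSC message following the OSC 1.0 encoding rules (null-terminated strings padded to a multiple of four bytes, big-endian 32-bit floats). The address `/source/1/aed` and the meaning of its three arguments (azimuth, elevation, distance) are hypothetical placeholders; the actual address space should be taken from the Spat documentation.

```python
import struct

def _osc_string(text):
    """Encode an OSC string: ASCII bytes, null-terminated, padded to 4 bytes."""
    data = text.encode("ascii")
    return data + b"\x00" * (4 - len(data) % 4)

def osc_message(address, *values):
    """Build an OSC message whose arguments are all 32-bit floats."""
    type_tags = "," + "f" * len(values)
    payload = b"".join(struct.pack(">f", float(v)) for v in values)
    return _osc_string(address) + _osc_string(type_tags) + payload
```

The resulting bytes, for example `osc_message("/source/1/aed", 30.0, 0.0, 2.0)`, can be sent over UDP with the standard `socket` module to whatever host and port the receiving patch listens on.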

Summary

In short, Ircam Spat is a comprehensive library for sound spatialization and artificial reverberation, implemented in the Max environment. Featuring over 300 modules, the toolkit can be used in a wide range of immersive productions. The package also comes with a wealth of documentation and tutorials.

Stimulated by research findings, technological innovations and artistic challenges, Spat remains a constantly evolving framework. Near-term work focuses in particular on the capture, analysis, and manipulation of 3D spatial room impulse responses in the HOA domain, as well as on the use of object-based audio formats for interactive six-degrees-of-freedom navigation in VR/AR applications.

Thibaut Carpentier

Thibaut Carpentier is an R&D engineer at IRCAM (Institut de Recherche et de Coordination Acoustique/Musique), Paris. After studying acoustics at the Ecole Centrale and signal processing at the ENST Telecom Paris, he joined CNRS (French National Centre for Scientific Research) and the Acoustics & Cognition Research Group in 2008. His research focuses on spatial audio, room acoustics, artificial reverberation, and computer tools for 3D composition and mixing. In recent years, he has been responsible for the development of Ircam Spat, created the 3D mixing workstation Panoramix, and contributed to the design and implementation of a 350-loudspeaker array for holophonic sound reproduction in Ircam's concert hall.


Article translations are machine translated and proofread.
